A teller copies a member's email, including name, account type and branch location, and pastes it into ChatGPT, asking it to "write a professional response to this email."

While benchmarking risk controls, your risk management specialist pastes a portion of your risk assessment matrix into Claude with the prompt: "Can you rewrite these control descriptions more clearly and suggest any gaps?"

Your branch manager, after a busy morning and on deadline to submit quarterly team performance reviews and plans by 5 pm, asks ChatGPT: "Turn these performance notes into a clear coaching plan for my employee."

It's happening in the simple daily rhythms of your branch: responding to member inquiries or concerns, taking a shortcut when the next meeting starts in five minutes, and showing up as prepared and knowledgeable as possible to help land that next member.

On the surface, these are the moments that happen on an ordinary Tuesday. In reality, these are compliance risks hidden in plain sight.

Let's take a closer look:

In the first example, the teller left your secure IT system and pasted protected member information into a public AI model.

In the second, the very framework you'd present to an examiner could now become training data for an unvetted third-party tool.

In the third scenario, an employee's HR information (performance concerns, coaching needs) now sits on servers you don't control and can't retrieve.

Your team isn't careless. They're using a tool so accessible and convenient that reaching for it doesn't feel like a choice, especially when the to-do list is longer than the hours in the day.

And let's face it, you're running lean. Even your day includes wearing hats far outside the "executive" lane: managing vendor relationships, writing job descriptions, fixing a printer, explaining policy decisions, troubleshooting Wi-Fi and even covering for vacations.

You're not alone. According to Wipfli's 2026 State of the Credit Union Industry Report, 67% of institutions are implementing AI, but only 16% have an enterprise-wide roadmap. Essentially, the tools are in use. The governance isn't.

The question isn't whether you formally adopt AI; it's already embedded in how your team works. The question is whether you can demonstrate how you're managing the associated risks.

The National Credit Union Administration (NCUA) has prioritized three main areas that intersect with AI:

- Operational risk management: this includes oversight of critical operational domains that directly affect financial stability and member trust.

- Third-party vendor oversight: especially important since tools you use, such as your core processor, your loan origination system and the chatbot on your website, may all be AI-powered.

- Fraud prevention and detection: fraud within the U.S. financial system remains a threat, making your internal controls and efforts to deter and detect fraud that much more critical.

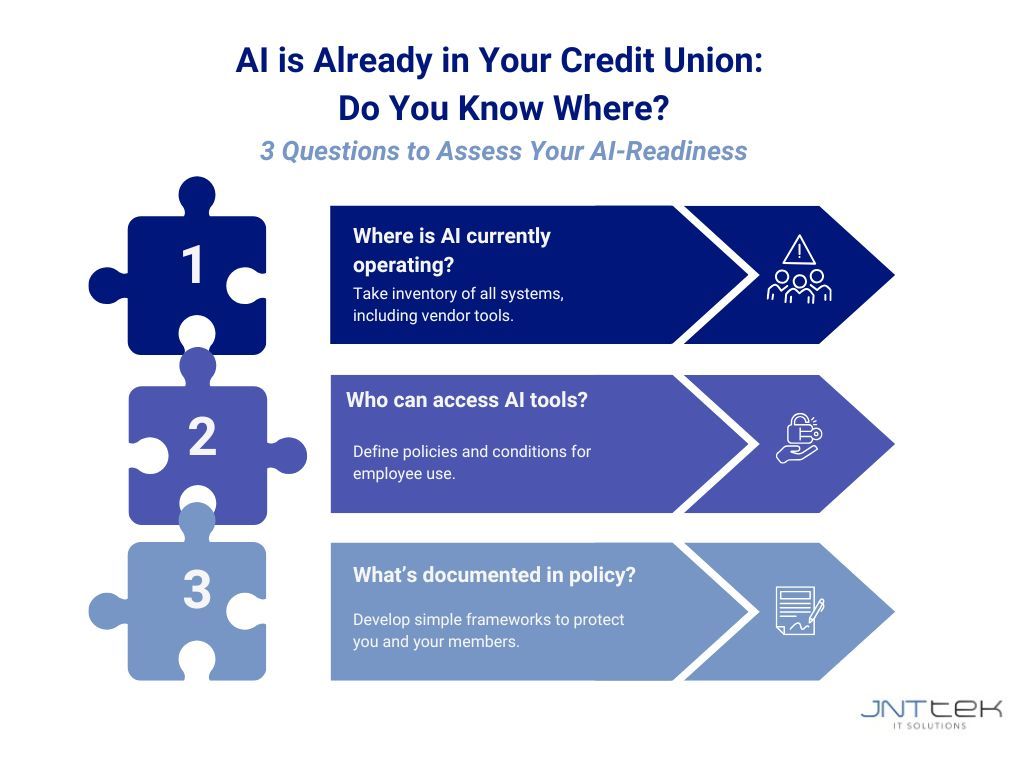

Here are three main questions to consider as you assess your credit union's AI-readiness:

- Where is AI currently operating? Given the examples above, it's being used already, so take an inventory of all your systems, including those with vendors, to understand where AI is powering decisions or processing information.

- Who can access AI tools? Do you have a policy in place? Under what conditions are employees using tools and how?

- What's documented in policy? Are your systems and processes documented in policies? If asked, could you point to where those are? The point isn't to overcomplicate things, but to have simple frameworks and policies that protect you and your members.

What does a good governance structure look like for your organization? It's not as complicated as it sounds, but it requires being diligent and intentional.

Have a written policy on how AI can and should be used. Be sure it outlines who is accountable and who owns the decisions surrounding AI. Regularly review AI use with your vendors to understand the safeguards they currently have in place. AI is rapidly evolving, so the goal isn't perfection; it's having awareness, clarity and reasonable controls to reduce risk.

Your team will keep reaching for tools to help them serve your members. Rather than stop anyone from using them, make sure guardrails are in place before an examiner asks to see them. And if you aren't sure where to start, JNTtek helps credit unions just like yours assess readiness and build practical frameworks specific to your reality and risk profile.